Build a Web App to Chat with Scanned Documents Using ChatGPT and LangChain

Large language models (LLMs) are emerging which can help us in tasks like text summarization, translation, question answering, etc and OpenAI’s ChatGPT is one of the state-of-art models which is available via its web or API interfaces.

ChatGPT can be useful for document management. One use case of it is to answer questions with the document providing the background knowledge.

In the previous article, we’ve built a web application to scan documents and run OCR with Dynamic Web TWAIN and Tesseract.js. In this article, we are going to add the ability to chat with scanned documents to it.

What you’ll build: A JavaScript web application that scans physical documents, extracts text via OCR, and lets you ask natural-language questions about the content using the ChatGPT API and LangChain.

Key Takeaways

- LangChain’s vector store lets ChatGPT answer questions grounded in your scanned document text, reducing hallucination.

- Dynamic Web TWAIN handles scanner hardware integration in the browser; Tesseract.js performs client-side OCR on the scanned images.

- Splitting OCR text into chunks with

RecursiveCharacterTextSplitterand embedding them via OpenAI produces a searchable knowledge base for Q&A. - This approach works for any text-heavy scanned document — release notes, legal contracts, news articles — where you need targeted answers rather than manual reading.

Common Developer Questions

- How do I use ChatGPT to answer questions about scanned documents in a web app?

- How do I create a vector store from OCR text using LangChain and OpenAI embeddings in JavaScript?

- Why does ChatGPT hallucinate answers without document context, and how does RAG fix it?

Prerequisites

- Node.js installed

- An OpenAI API key (for embeddings and ChatGPT)

- A working document scanning + OCR setup from the previous tutorial

- Get a 30-day free trial license for Dynamic Web TWAIN

Build a ChatGPT-Powered Document Q&A with LangChain

LangChain is a library aiming to assist in calling LLMs. We can use it to let ChatGPT answer questions with scanned documents providing extra knowledge. Here are the steps to use it in a web application:

Step 1: Install LangChain

npm install langchain

Step 2: Create a Vector Store from Scanned Document Text

A Vector Store stores memories in a VectorDB and queries the top-K most “salient” docs every time it is called. It can be used to provide related background knowledge for a chat.

-

Create an OpenAI embeddings model.

// Create the models const embeddings = new OpenAIEmbeddings({openAIApiKey: apikey}); -

Use

RecursiveCharacterTextSplitterto split the text into split documents.const splitter = new RecursiveCharacterTextSplitter({ chunkSize: 4000, chunkOverlap: 200, }); const docs = await splitter.createDocuments([text]); -

Create a Vector Store using the split documents and the embeddings model.

const store = await MemoryVectorStore.fromDocuments(docs, embeddings);

Step 3: Start a QA Chain to Answer Questions over Documents

-

Create a new OpenAI model.

const model = new OpenAI({openAIApiKey: apikey, temperature: 0 }); -

Create a new QA chain.

const chain = loadQARefineChain(model); -

Select the documents related to the question from the Vector Store.

const relevantDocs = await store.similaritySearch(question); -

Call the chain to answer the question over the selected documents.

// Call the chain const res = await chain.call({ input_documents: relevantDocs, question, });

Test the Results with Real Documents

Next, let’s test if it works well. Here, we test two example documents.

Example 1 - Release Notes of Dynamic Web TWAIN

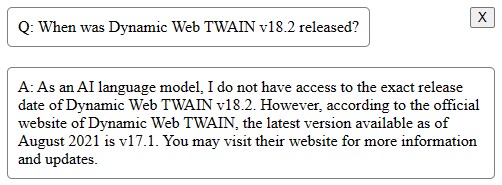

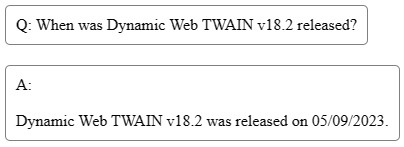

Suppose we would like to know when the v18.2 version of Dynamic Web TWAIN was released.

If we don’t use the document as the background knowledge, it will produce the following answer:

After using the document, it can correctly answer the question:

Example 2 - BBC News about World Snooker Championship

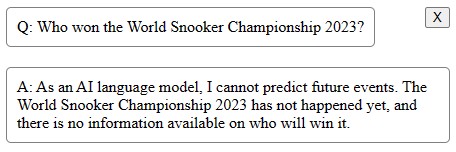

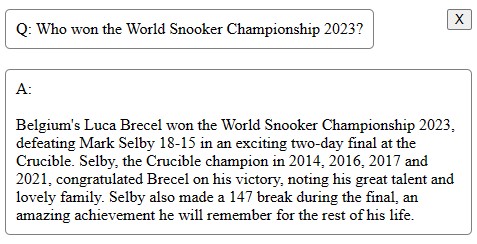

Suppose we would like to know who won the World Snooker Championship 2023.

If we don’t use the news as the background knowledge, it will produce the following answer:

After using the news, it can correctly answer the question:

Known Limitations

ChatGPT sometimes makes up things which are not true. Providing relevant document context via the vector store reduces but does not eliminate hallucination.

Common Issues and Edge Cases

- OCR quality affects answers: If the scanned image is skewed, blurry, or low-resolution, Tesseract.js may produce garbled text. Pre-process scans (deskew, crop, adjust contrast) before running OCR to improve ChatGPT’s answer accuracy.

- Token limit exceeded on large documents: Long documents may produce chunks that exceed the OpenAI model’s context window. Reduce

chunkSizeinRecursiveCharacterTextSplitter(e.g., from 4000 to 2000) and increasechunkOverlapto ensure no content is lost. - API key exposed in client-side code: Never ship your OpenAI API key in production front-end code. Use a backend proxy or serverless function to forward requests to the OpenAI API securely.

Source Code

Checkout the source code of the demo to have a try: