A Deterministic Benchmark for Barcode SDKs on iOS

Why This Benchmark Matters

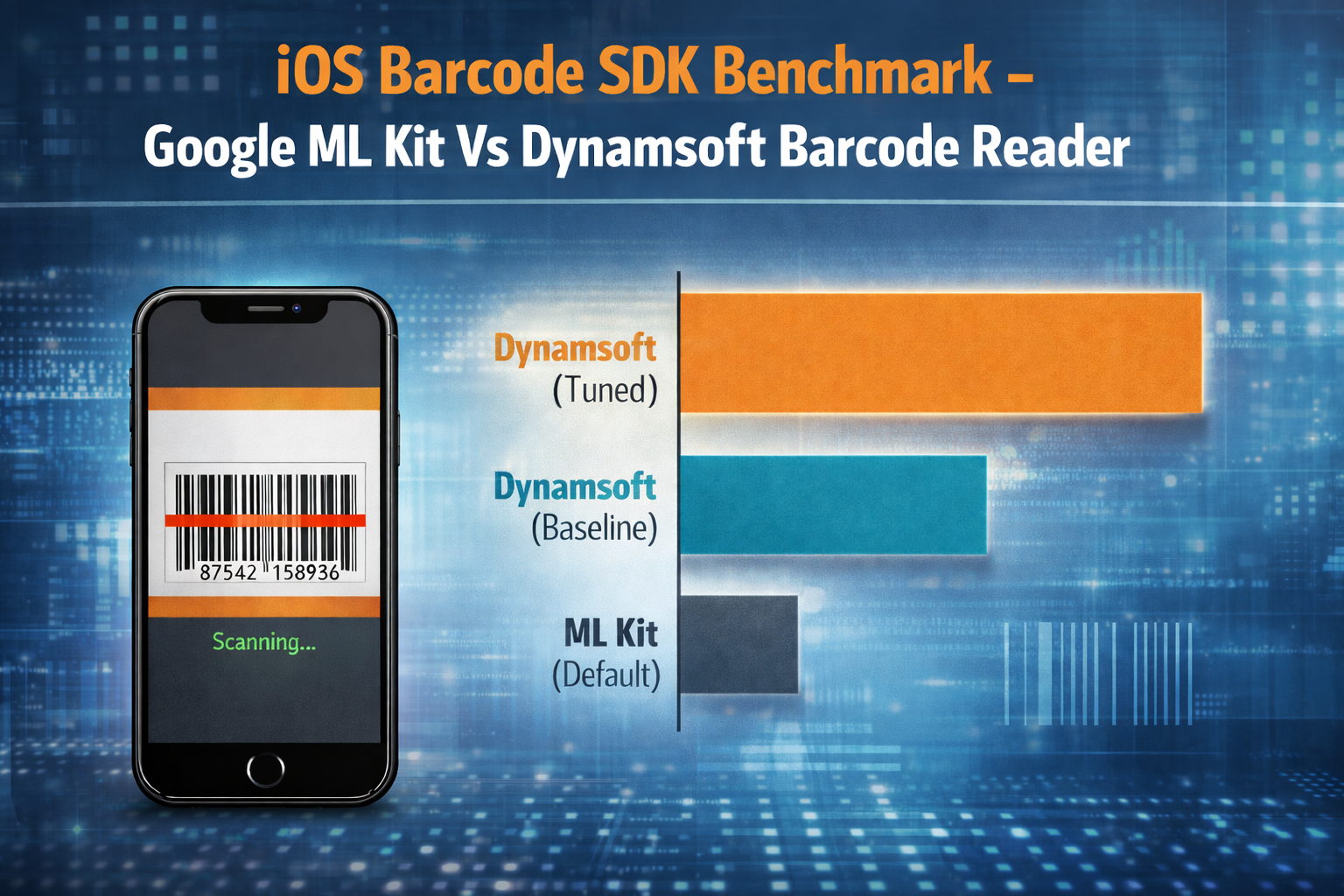

Choosing the right barcode SDK impacts scan success, user experience, and operational efficiency. This benchmark compares ML Kit and Dynamsoft on iOS under real-world conditions to highlight trade-offs between speed and detection accuracy.

Test Setup: Devices, SDKs, and Dataset

- Hardware: iPhone 13 (Standard)

- OS: iOS 17+

- Comparison Targets: Google ML Kit vs. Dynamsoft Barcode Reader (DBR)

Dataset Design and Real-World Scenarios

The dataset covers six real-world scenarios: motion blur, distance, reflections, rotation, damaged codes, and multi-barcode frames. Each is designed to reflect a common scanning challenge in production apps.

The clips span several symbologies, each paired with a specific environmental challenge. The first five clips each feature a single barcode (EAN-13, QR Code, or ITF) subjected to one stressor such as blur, distance, or rotation. The final clip raises the difficulty with a multi-code scenario: two barcodes of different formats in the same frame.

Methodology: How Performance Was Measured

Deterministic Frame Sampling

Frames are extracted using a fixed sampling policy to maintain deterministic coverage:

| Parameter | Value |
|---|---|
| everyNFrames | 5 |
| maxFrames | 60 |
| clipTimeoutSeconds | 120 |
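To make the sampling policy concrete, here is a minimal Python sketch of a fixed-stride selector that uses the two frame-related values from the table. The function name, the choice to start at frame 0, and the choice to apply the cap after striding are assumptions for illustration; the benchmark's own implementation may order these steps differently, and `clipTimeoutSeconds` is not modeled here.

```python
from typing import List

EVERY_N_FRAMES = 5  # everyNFrames from the table above
MAX_FRAMES = 60     # maxFrames from the table above


def sample_frame_indices(total_frames: int,
                         every_n: int = EVERY_N_FRAMES,
                         max_frames: int = MAX_FRAMES) -> List[int]:
    """Return the frame indices a fixed-stride policy would select.

    Assumption: sampling starts at frame 0 and the maxFrames cap is
    applied after striding. The result depends only on the inputs,
    so the same clip always yields the same frames.
    """
    if total_frames < 0 or every_n <= 0 or max_frames < 0:
        raise ValueError("total_frames and max_frames must be >= 0, every_n > 0")
    return list(range(0, total_frames, every_n))[:max_frames]


# A 300-frame clip yields exactly 60 samples: 0, 5, ..., 295.
indices = sample_frame_indices(300)
print(len(indices), indices[:3], indices[-1])  # 60 [0, 5, 10] 295
```

Because the selection is a pure function of the clip length and the two parameters, both SDKs can be fed the identical list of frames, which is what the parity track relies on.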

Parity Track

In the parity track, both SDKs are evaluated under identical conditions:

- Same extracted frames and image preprocessing.

- All barcode formats enabled.

- No confidence filtering.

SDK Configuration Notes:

ML Kit was tested using default settings. Dynamsoft was evaluated in two modes: a standard configuration and an optimized setup designed to improve detection accuracy in challenging conditions.

Note on Latency: Parity track latency measures the synchronous per-frame decode cost using a single-image API. Neither SDK would typically use this synchronous approach in a production camera scanner—both provide asynchronous streaming pipelines with internal frame pacing. These numbers reflect decode difficulty per frame, not achievable camera FPS.

Results — Accuracy and Latency Comparison

Aggregate Performance Metrics

| SDK | Recall | Precision* | Mean Latency (ms) |
|---|---|---|---|
| ML Kit (Default) | 0.42 | 0.50 | 13.07 |
| Dynamsoft (Baseline) | 0.75 | 0.83 | 31.25 |
| Dynamsoft (Tuned) | 1.00 | 1.00 | 172.82 |

* Precision note: Recall and precision are macro-averaged across clips. When an SDK returned no detections at all for a clip, precision is undefined (no detections to score) and is recorded as 0 for that clip. This convention penalizes abstention the same as returning only false positives.
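The macro-averaging convention in the precision note can be checked with a short Python sketch. The per-clip recall values are taken from the appendix table. The per-clip precision values are an assumption: the source does not list them, so the sketch uses 1.0 wherever an SDK returned detections and `None` where it returned none, which happens to reproduce the aggregate figures. The function name is hypothetical.

```python
from statistics import mean
from typing import List, Optional


def macro_average(per_clip: List[Optional[float]]) -> float:
    """Average one metric across clips, giving every clip equal weight.

    None marks a clip where the metric is undefined (no detections);
    following the precision note, it is counted as 0.
    """
    return mean(0.0 if value is None else value for value in per_clip)


# Per-clip recall from the appendix table, in row order.
mlkit_recall = [0.0, 1.0, 0.0, 0.0, 1.0, 0.5]
dbr_base_recall = [1.0, 1.0, 1.0, 1.0, 0.0, 0.5]

# Assumed per-clip precision: 1.0 where detections exist, None otherwise.
mlkit_precision = [None, 1.0, None, None, 1.0, 1.0]
dbr_base_precision = [1.0, 1.0, 1.0, 1.0, None, 1.0]

print(round(macro_average(mlkit_recall), 2),
      round(macro_average(mlkit_precision), 2))     # 0.42 0.5
print(round(macro_average(dbr_base_recall), 2),
      round(macro_average(dbr_base_precision), 2))  # 0.75 0.83
```

Under this convention, a clip with no detections pulls the precision average down exactly as a clip with only wrong detections would, which is why ML Kit's precision lands at 0.50 with three silent clips.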

Multi-Code Detection Performance

The final clip, wide_to_close_dm, provided a key differentiator. It contains two barcodes: a prominent central code and a smaller, secondary code at the edge of the frame.

Both ML Kit and the Dynamsoft Baseline configuration achieved a recall of 0.5 here: they captured the primary center code but failed to localize the secondary one. Only the Dynamsoft Tuned configuration decoded both, which shows that recall on this clip depends on resolving every code in a complex scene, not only the most prominent one.
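The 0.5 figure follows directly from how per-clip recall is counted. A minimal Python sketch, assuming recall is the fraction of a clip's expected unique codes that were decoded at least once across its sampled frames (the function name is hypothetical, and the source does not say which of the two codes is the central one, so the example arbitrarily treats the first as the one that was read):

```python
from typing import Iterable


def clip_recall(expected: Iterable[str], detected: Iterable[str]) -> float:
    """Fraction of expected unique codes that appear among the detections.

    Repeated reads of the same code across frames count once, and
    detections that match no expected code do not raise recall.
    """
    expected_set = set(expected)
    if not expected_set:
        raise ValueError("clip has no expected codes")
    return len(expected_set & set(detected)) / len(expected_set)


# Expected texts for wide_to_close_dm, from the appendix table.
expected = ["123457347", "ae-1534"]

# One code read in several frames, the other never read.
print(clip_recall(expected, ["123457347", "123457347"]))  # 0.5
# Both codes read.
print(clip_recall(expected, ["123457347", "ae-1534"]))    # 1.0
```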

Key Insights for Product Teams

The results show that ML Kit is highly efficient on “easy” codes (about 13 ms per frame), but its recall drops to 0.0 on the distance, damaged, and reflective clips and to 0.5 when multiple codes are present. Dynamsoft’s “Tuned” latency is notably higher per frame, but in practice higher per-frame recall may reduce the number of scan attempts a user needs: rather than hunting for the right angle or isolating a single code, the SDK can resolve the entire frame in one pass. This is a qualitative observation, not a measured end-to-end metric; a formal time-to-task study would require a different experimental design.

Appendix: Detailed Performance by Scenario

The table below provides the full breakdown of expected vs. detected results. A recall of 0.5 in the final row indicates that only one of the two expected codes was successfully read.

Column definitions:

- Unique Codes — the number of distinct barcodes expected in the clip’s annotation file.

- Latency — mean per-frame decode latency, computed only over frames where at least one barcode was detected. A value of “–” indicates no detections occurred, so no latency was recorded.

| Clip ID | Unique Codes | Expected Text | Format | ML Kit Recall | ML Kit Latency (ms) | DBR Base Recall | DBR Base Latency (ms) | DBR Tuned Recall | DBR Tuned Latency (ms) |
|---|---|---|---|---|---|---|---|---|---|
| EAN_13-distance | 1 | 3605972842046 | EAN_13 | 0.0 | – | 1.0 | 33 | 1.0 | 351 |
| EAN_13-motion-blur | 1 | 3605972842046 | EAN_13 | 1.0 | 15 | 1.0 | 29 | 1.0 | 272 |
| damaged_qrcode | 1 | A-51 | QR_CODE | 0.0 | – | 1.0 | 25 | 1.0 | 136 |
| reflective_surface | 1 | DM-QRBatch223 | QR_CODE | 0.0 | – | 1.0 | 52 | 1.0 | 146 |
| rotated_vertical_ITF | 1 | 1045678901234 | ITF | 1.0 | 14 | 0.0 | – | 1.0 | 85 |
| wide_to_close_dm | 2 | 123457347, ae-1534 | UPC_A, DM | 0.5 | 10 | 0.5 | 18 | 1.0 | 47 |
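The latency column definition above (mean over frames with at least one detection, “–” when there are none) can be sketched in Python as follows. The record shape, the function name, and the sample numbers are hypothetical and only illustrate the rule; they are not values from the benchmark's output files.

```python
from typing import List, Optional, Tuple

# (decode latency in ms, number of barcodes detected in that frame)
FrameResult = Tuple[float, int]


def mean_detection_latency(frames: List[FrameResult]) -> Optional[float]:
    """Mean latency over frames that produced at least one detection.

    Returns None when no frame detected anything, which corresponds
    to the "–" cells in the table.
    """
    hit_latencies = [latency for latency, count in frames if count > 0]
    if not hit_latencies:
        return None
    return sum(hit_latencies) / len(hit_latencies)


# Made-up frames: the 40 ms miss is excluded from the mean.
print(mean_detection_latency([(12.0, 1), (40.0, 0), (16.0, 1)]))  # 14.0
# No detections at all: nothing to average.
print(mean_detection_latency([(40.0, 0), (38.0, 0)]))             # None
```

One consequence of this definition is that time spent on frames where nothing was found does not appear in the column, so a configuration that fails quickly and one that fails slowly look the same on a clip with zero recall.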

Reproducibility and Benchmark Design

Each run exports:

- CSV output for analysis.

- JSON output for machine-readable metadata and traceability.

Given the same dataset, sampling settings, and iPhone 13 hardware, the benchmark is designed to produce repeatable results.

References

Dataset

https://github.com/chloe-dynamsoft/datasets-from-dynamsoft/tree/main/video-based-testing/single-code

Benchmark Implementation

https://github.com/chloe-dynamsoft/benchmark-mlkit-dbr

Disclosure

This benchmark was authored by a team affiliated with Dynamsoft. The methodology, dataset, and source code are published in full so that results can be independently verified and reproduced. Readers are encouraged to run the benchmark with their own datasets and draw their own conclusions.

Notes

The dataset and source code are included as references so the benchmark setup can be reviewed and reproduced more easily. Additional datasets can also be substituted to evaluate behavior under different environmental conditions.
